Sydney Business Insights

Megatrends watch

Facebook: a troll’s paradise

…in October 2019, all 15 of the top pages targeting Christian Americans, 10 of the top 15 Facebook pages targeting Black Americans, and four of the top 12 Facebook pages targeting Native Americans were being run by troll farms.

MIT Technology Review, September 2021

An internal Facebook report obtained by MIT Technology Review showed that troll farms (professional groups that work in a coordinated fashion to post provocative and false content) were running Facebook’s most popular pages for Christian and Black American content.

The farms were operating out of Eastern Europe (mainly Kosovo and Macedonia).

Troll farms harvesting Facebook users

In the lead-up to the 2020 US presidential election, this content was reaching 140 million US users per month, 75% of whom had never followed any of the pages. The figures come from the internal Facebook “Troll Farms Report”, whose full title is “How Communities are Exploited on Our Platforms: A final Look at the ‘Troll Farm’ Pages”.

Once posted, the content was pushed by Facebook’s content recommendation algorithms, which propelled troll pages into people’s newsfeeds.

The report’s author, Jeff Allen, is a former data scientist at Facebook. He was not the Facebook employee who leaked the document. Allen noted that the troll farms were targeting the same demographic groups as the Kremlin-backed Internet Research Agency (IRA) had during the 2016 election.

“This is not healthy,” Allen wrote. “The fact that actors with possible ties to the IRA have access to huge audience numbers in the same demographic groups targeted by the IRA poses an enormous risk to the US 2020 election.”

Facebook said it had taken action to address ‘inauthentic groups’. However, MIT Technology Review reported that five of the troll farm pages remained active.

Bad company

According to the MIT Technology Review report, Facebook itself recognises that content more likely to receive user engagement (likes, comments and shares) is also more likely to be considered ‘bad’. Even so, the report alleges, Facebook’s algorithm ranks users’ newsfeeds according to what will receive the highest engagement. Moreover, when a user’s friends comment on or reshare posts from one of these pages, that user will see the content in their own newsfeed. In effect, bad information means growth for Facebook.

The Google difference

Allen points out that Facebook could run its Newsfeed differently; in fact, he recommends adopting Google’s methodology. Google’s ‘Search Quality Rater Guidelines’ is its ‘first line of defence against disinformation and misinformation’. This process (known as the graph-based authority measure) assesses the quality of a web page according to how often it cites, and is cited by, other quality web pages, and uses that assessment to demote bad actors in search rankings.
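To make the idea of a graph-based authority measure concrete, here is a minimal sketch in Python. It is a simplified PageRank-style calculation, not Google’s or Facebook’s actual method: it only rewards being cited (it ignores the quality of outbound citations), and the function name `authority_scores`, the damping value and the toy page names are all assumptions made for illustration.

```python
def authority_scores(links, damping=0.85, iterations=50):
    """Simplified PageRank-style authority scores (illustrative only).

    `links` maps each page to the list of pages it cites.
    """
    # Collect every page that cites or is cited.
    pages = set(links) | {t for targets in links.values() for t in targets}
    n = len(pages)
    scores = {p: 1.0 / n for p in pages}

    for _ in range(iterations):
        # Every page keeps a small baseline score.
        new_scores = {p: (1.0 - damping) / n for p in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # A page passes its authority to the pages it cites.
                share = damping * scores[page] / len(targets)
                for target in targets:
                    new_scores[target] += share
            else:
                # A page that cites nothing spreads its score evenly.
                share = damping * scores[page] / n
                for p in pages:
                    new_scores[p] += share
        scores = new_scores
    return scores


# Hypothetical toy graph: three outlets cite each other,
# while nobody cites the troll page.
links = {
    "news_a": ["news_b", "news_c"],
    "news_b": ["news_a", "news_c"],
    "news_c": ["news_a", "news_b"],
    "troll_page": ["news_a"],
}
scores = authority_scores(links)
for page, score in sorted(scores.items(), key=lambda item: -item[1]):
    print(f"{page}: {score:.3f}")
```

In this toy graph the troll page ends up with the lowest score because no other page cites it, however much it posts. A ranking system built on such a measure would demote the page regardless of the engagement it attracts.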

Stranger friends

Facebook says its mission is to help people connect, but when the algorithms running those connections favour anonymous groups bearing propaganda and misinformation, the power of individuals to control their networks can be undermined. Allen summed up the unfriendly disconnect:

“It will always strike me as profoundly weird that the largest Page on FB posting African American content is …run out of Kosovo. That’s so weird! And genuinely horrifying.”

Algorithms are manipulating individuals who cannot see how or why this is happening to them, and who are therefore powerless to do anything about it. Rather than being in control of their social media experience, Facebook users are subject to opaque connections. This tension is at the heart of the impactful technology megatrend: algorithms are getting faster and ‘better’ at many things, including connecting multitudes of people for manifold purposes. The speed of this transformation continues to challenge people’s ability to understand and reflect on what it means to be human in the midst of this accelerating megatrend.

Megatrends watch: amplified individuals

This update is part of our Megatrends Watch series, which tracks developments that inform our six global megatrends….

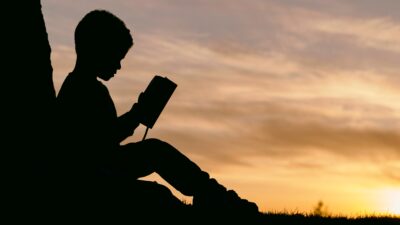

Image: Mark König

Sydney Business Insights is a University of Sydney Business School initiative that aims to provide the business community and the public, including our students, alumni and partners, with a deeper understanding of major issues and trends around the future of business.

We believe in open and honest access to knowledge. We use a Creative Commons Attribution NoDerivatives licence for our articles and podcasts, so you can republish them for free, online or in print.