A keen-eyed Australian university student exposed a serious breach in Western defence security when he spotted the locations and activities of US and Australian military bases in so-called heat maps generated from the data of millions of users of a popular fitness app.

Not only did the aggregated ‘running’ data reveal secret US military bases in the Middle East and Africa (troops running the perimeter literally pounded out the location), it could also help bad actors track popular military transport routes between bases and even identify individual officers.

Sandra and Kai discuss this topic on The Future This Week podcast @6.59

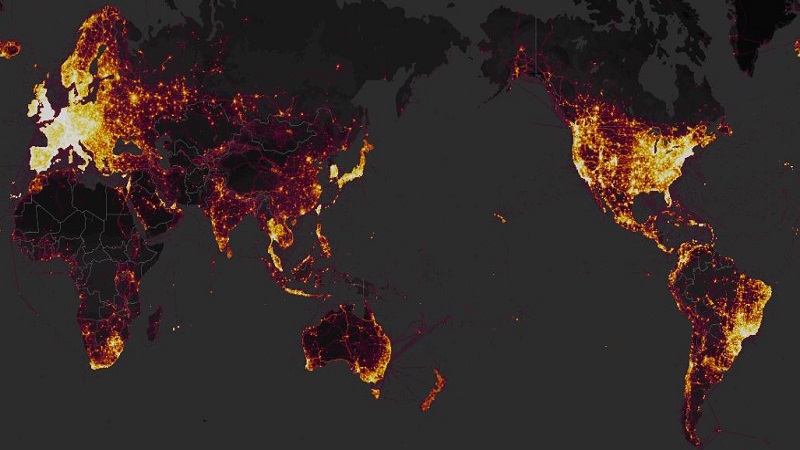

Strava is a fitness app that runs on smartphones and popular fitness trackers. It records users’ exercise habits and their running and cycling routes, and shares that information with others in the digital ‘Strava community’. Millions of users generated trillions of GPS data points, which Strava assembled into a global ‘heat map’ showing where users ran or cycled while the app was on.

The breach mainly relates to US and other Western military operations because most of the app’s users are Westerners, and the app is apparently popular with US troops, who leave the tracker on not only when exercising but also when out on military exercises. Hence their aggregated data stands out in the heat map, particularly in countries where fitness apps are not ‘a thing’ with the locals.

The breach is not the fault of the military, the individual users of the app or even Strava, none of whom could have foreseen the consequences of releasing the data, especially as it was anonymised.

But aggregated data – big data – reveals properties at a systemic level that are not visible in any individual data set. It is time for all of us to view privacy – our own and others’ – not as an individual matter but as a public good that needs to be protected much more carefully.
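The point about aggregation can be illustrated with a toy sketch. This is not Strava’s actual method: the coordinates, the grid size and the function names `heat_map` and `hot_cells` are all made up for illustration. It bins anonymised GPS points into grid cells and counts them, with no user identity attached to any point.

```python
import math
from collections import Counter

def heat_map(points, cell_deg=0.01):
    """Count GPS points per grid cell. No user identity is stored."""
    counts = Counter()
    for lat, lon in points:
        # Map each point to the grid cell that contains it.
        cell = (math.floor(lat / cell_deg), math.floor(lon / cell_deg))
        counts[cell] += 1
    return counts

def hot_cells(counts, threshold):
    """Return the cells whose point count reaches the threshold."""
    return sorted(cell for cell, n in counts.items() if n >= threshold)

# Hypothetical, anonymised traces from three different users.
trace_a = [(10.0052, 20.0051), (10.0055, 20.0054)]
trace_b = [(10.0053, 20.0056), (10.0057, 20.0052)]
trace_c = [(10.0054, 20.0055), (35.1234, -5.4321)]

# Each trace on its own looks unremarkable...
single = heat_map(trace_a)

# ...but pooled together, one cell clearly stands out.
counts = heat_map(trace_a + trace_b + trace_c)
hot = hot_cells(counts, threshold=5)
```

No single trace says much, yet the pooled counts single out one location. In a region where few locals use the app, such a cluster is exactly what makes a base visible.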

IT expert Professor Zeynep Tufekci has called for the onus to be shifted so companies have to ask users if they want to ‘opt in’ to sharing their data rather than the current bias whereby new users are required to ‘opt out’ if they want to keep their information private.

However, such a shift might call into question the financial viability of many social platforms, including giants such as Facebook, Google and YouTube. All of these data-gathering applications, especially if they are free, are constructed around a business model that relies on selling access to their users (via advertising) or selling users’ data to third parties.

The aggressive monetisation by social media platforms, and the emerging evidence that they are built to encourage addiction to their product, have provoked a backlash, with calls for their conduct and reach to be regulated.

However, any regulation would also have to balance the fact that sharing data is often an intrinsic part of the pleasure that users want from the digital experience. Hence, whilst Strava sells joggers’ data to cities and councils to do with it what they wish, Strava users enjoy testing themselves against other runners in their shared digital space.

Clearly, a model that allows unregulated use and abuse of users’ data needs fixing, and this year may be the time for a rebalancing. But will it come from the operators or require intervention by regulators?